Top AI Coding Agents and Development Platforms in 2026: Atoms, Devin, Windsurf, Cursor, Warp, and More Compared

Source: MarkTechPost

Software development has changed. Engineers no longer type most code by hand. They describe intent, and...

Anthropic Releases Claude Fable 5 and Claude Mythos 5: Same Underlying Model, Different Safeguards, New Mythos-Class Tier

Source: MarkTechPost

Anthropic released two models on June 9, 2026: Claude Fable 5 and Claude Mythos 5. Both...

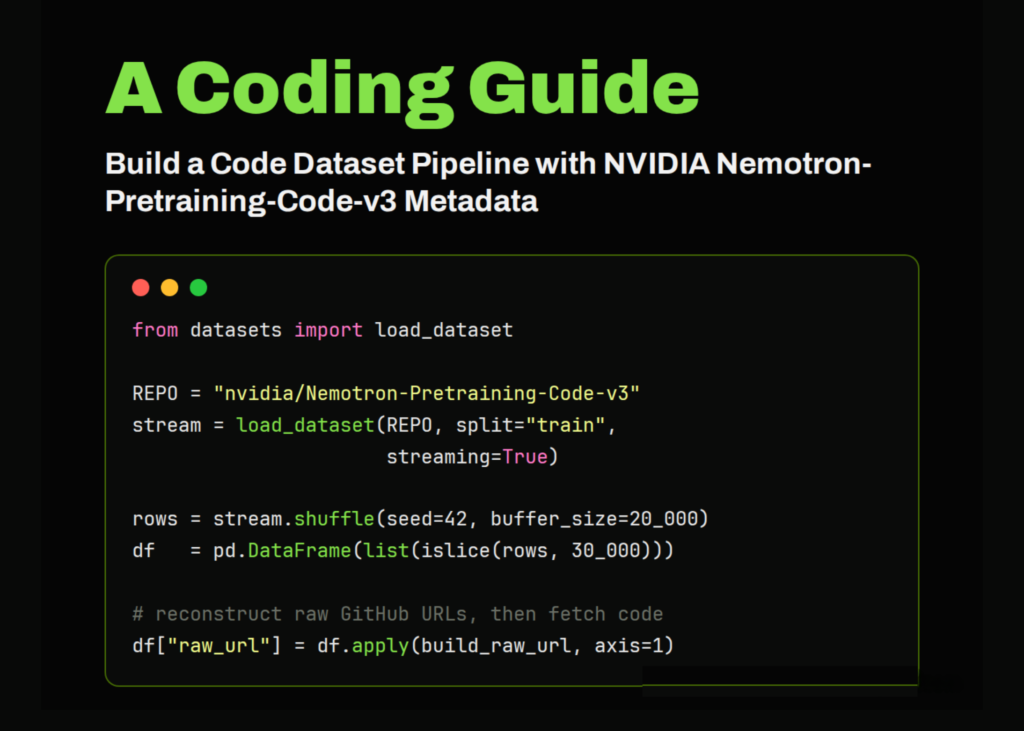

Building a Code Dataset Pipeline from NVIDIA Nemotron-Pretraining-Code-v3 Metadata with Streaming, Pandas, and tiktoken

Source: MarkTechPost

In this tutorial, we work with NVIDIA’s Nemotron-Pretraining-Code-v3 dataset as a large-scale metadata index for code...

Startup’s nuclear-inspired cooling system could make data centers more sustainable

Source: MIT News – Artificial intelligence

The rise of artificial intelligence is riding on the back of an...

The consequences of relying on AI for accurate news

Source: MIT News – Artificial intelligence

It’s no secret that the last few years have seen a massive...

Google Releases Gemini 3.5 Live Translate, a Streaming Speech-to-Speech Audio Model Covering 70+ Languages Across Meet, Translate, and the Live API

Source: MarkTechPost

Google just announced Gemini 3.5 Live Translate. It is the company’s latest audio model for live speech-to-speech...

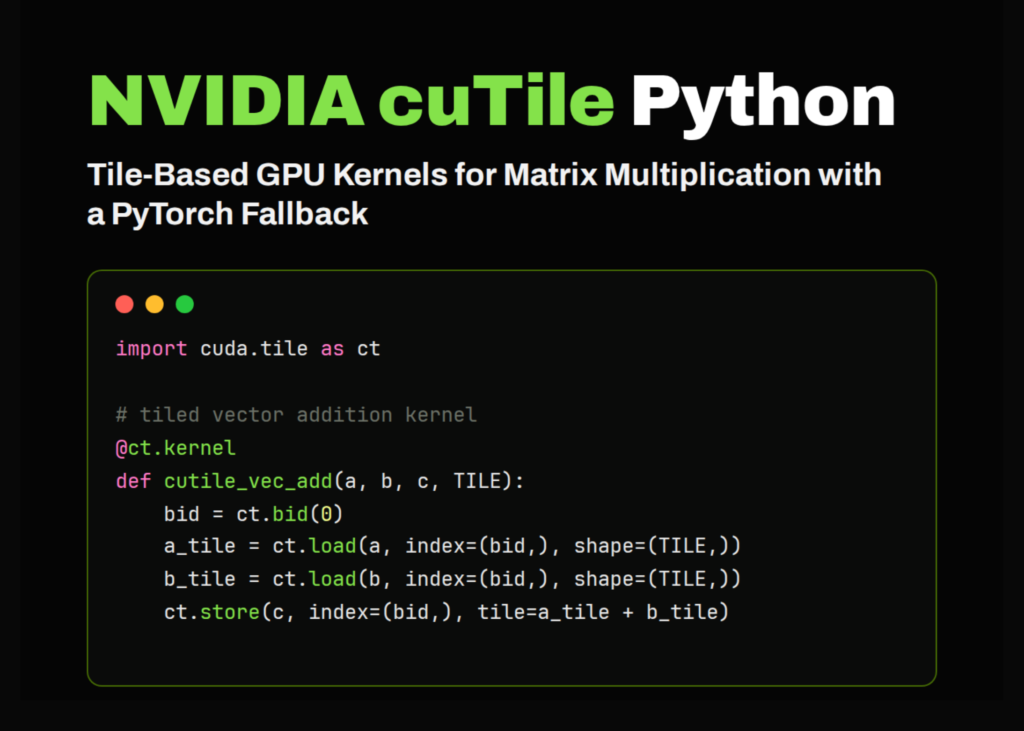

NVIDIA cuTile Python Tutorial: Building Tiled GPU Kernels for Vector Addition, Matrix Addition, and Matrix Multiplication in Colab

Source: MarkTechPost

In this tutorial, we implement an advanced hands-on workflow for NVIDIA cuTile Python, a tile-based GPU...

A New Study from Harvard and Perplexity Finds AI Agents Perform 26 Minutes of Autonomous Work per Session vs 33 Seconds for Search

Source: MarkTechPost

New research from Perplexity and Harvard offers field evidence on what AI agents do...

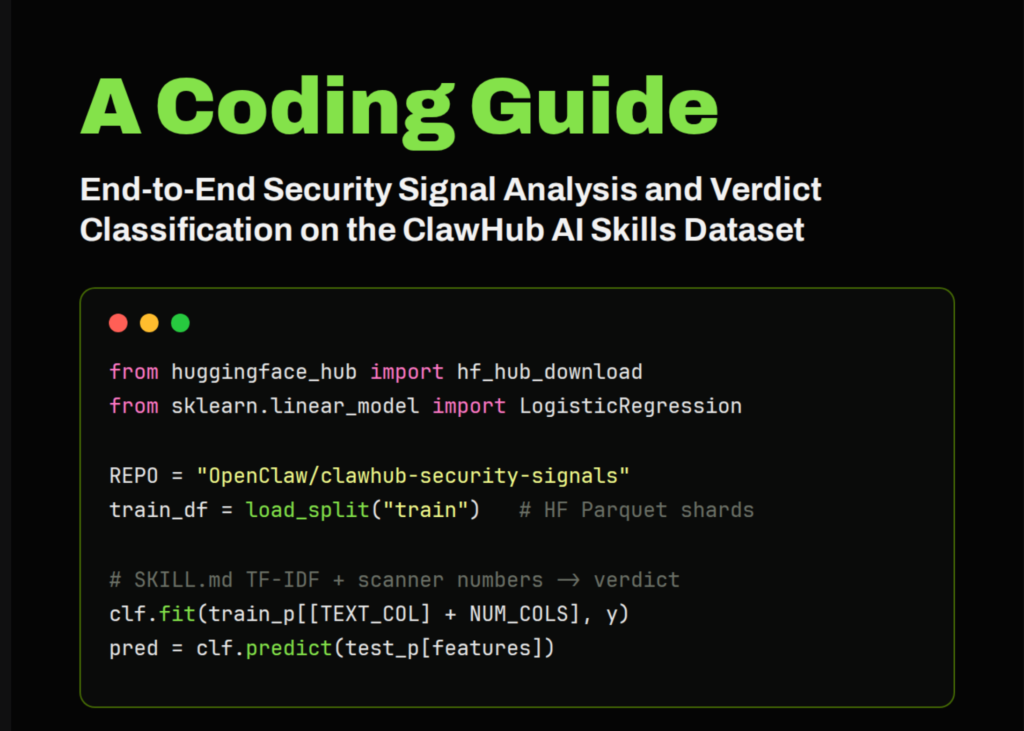

ClawHub Security Signals: A Coding Guide to End-to-End Security Signal Analysis and Verdict Classification on the AI Skills Dataset

Source: MarkTechPost

In this tutorial, we use the ClawHub Security Signals dataset to examine how different security scanners...

Xiaomi MiMo and TileRT Push a 1-Trillion-Parameter Model Past 1000 Tokens Per Second on Commodity GPUs

Source: MarkTechPost

Inference speed is becoming a competitive metric for large language models. Xiaomi’s MiMo team just released...