HtFLlib: A Unified Benchmarking Library for Evaluating Heterogeneous Federated Learning Methods Across Modalities

Source: MarkTechPost. AI institutions develop heterogeneous models for specific tasks but face data scarcity challenges during training. Traditional...

Why Small Language Models (SLMs) Are Poised to Redefine Agentic AI: Efficiency, Cost, and Practical Deployment

Source: MarkTechPost. The Shift in Agentic AI System Needs: LLMs are widely admired for their human-like capabilities and...

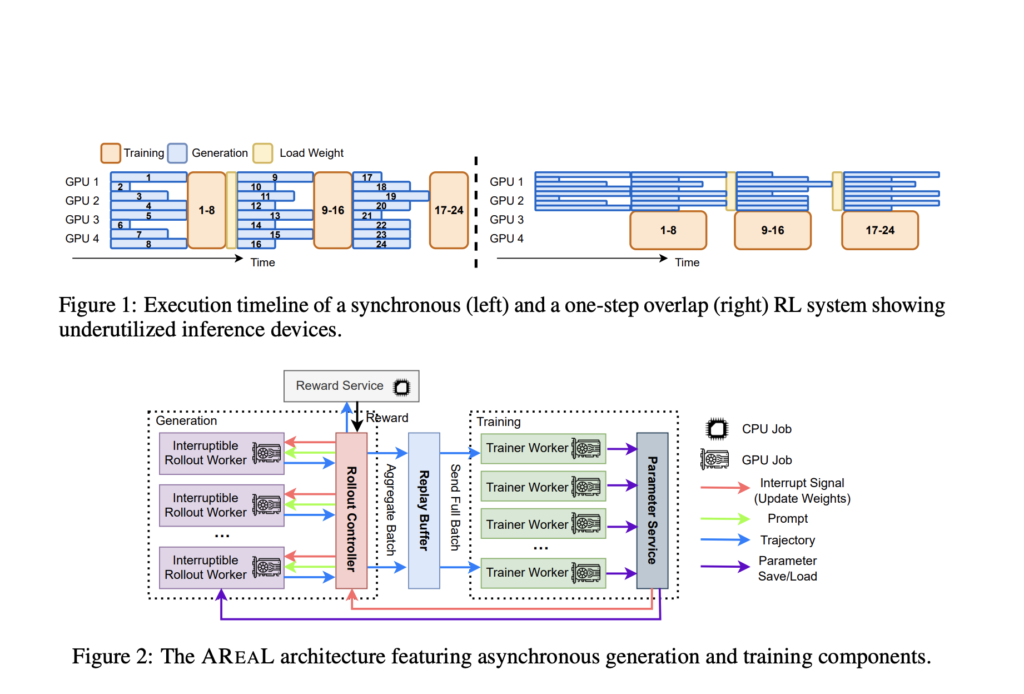

AREAL: Accelerating Large Reasoning Model Training with Fully Asynchronous Reinforcement Learning

Source: MarkTechPost. Introduction: The Need for Efficient RL in LRMs. Reinforcement Learning (RL) is increasingly used to enhance...

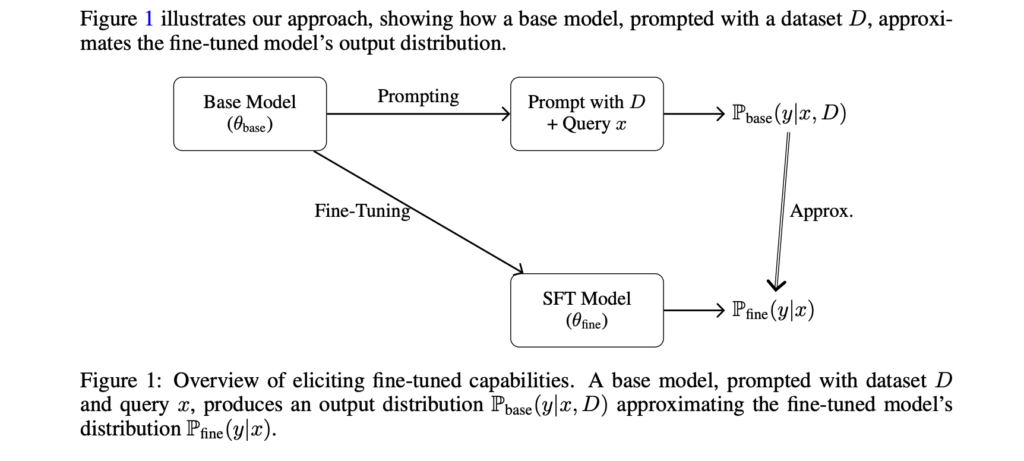

From Fine-Tuning to Prompt Engineering: Theory and Practice for Efficient Transformer Adaptation

Source: MarkTechPost. The Challenge of Fine-Tuning Large Transformer Models: Self-attention enables transformer models to capture long-range dependencies in...

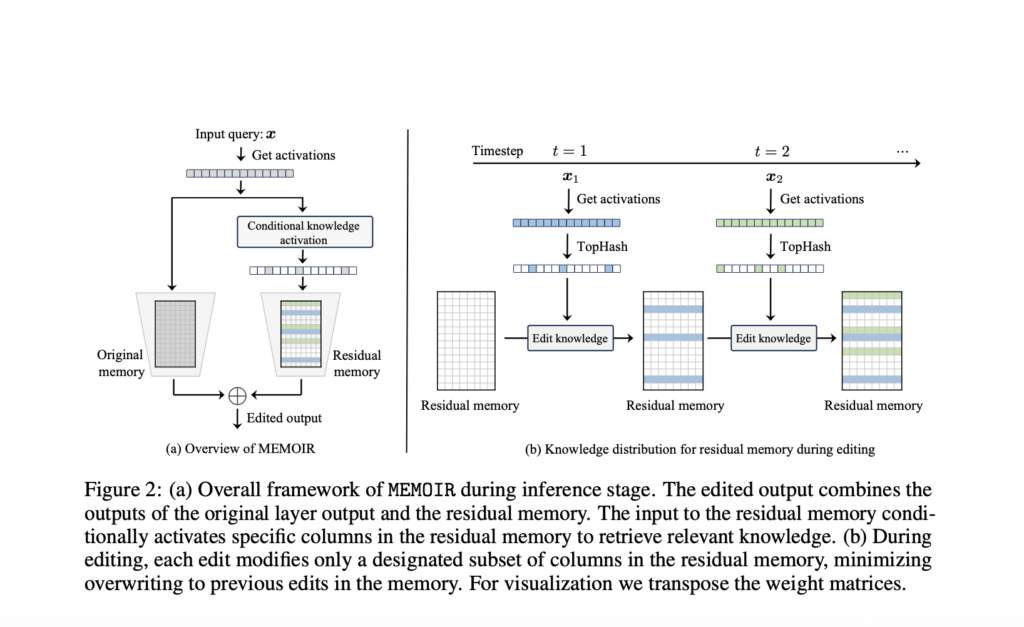

EPFL Researchers Introduce MEMOIR: A Scalable Framework for Lifelong Model Editing in LLMs

Source: MarkTechPost. The Challenge of Updating LLM Knowledge: LLMs have shown outstanding performance for various tasks through extensive...

StepFun Introduces Step-Audio-AQAA: A Fully End-to-End Audio Language Model for Natural Voice Interaction

Source: MarkTechPost. Rethinking Audio-Based Human-Computer Interaction: Machines that can respond to human speech with equally expressive and natural...

EPFL Researchers Unveil FG2 at CVPR: A New AI Model That Slashes Localization Errors by 28% for Autonomous Vehicles in GPS-Denied Environments

Source: MarkTechPost. Navigating the dense urban canyons of cities like San Francisco or New York can be a...

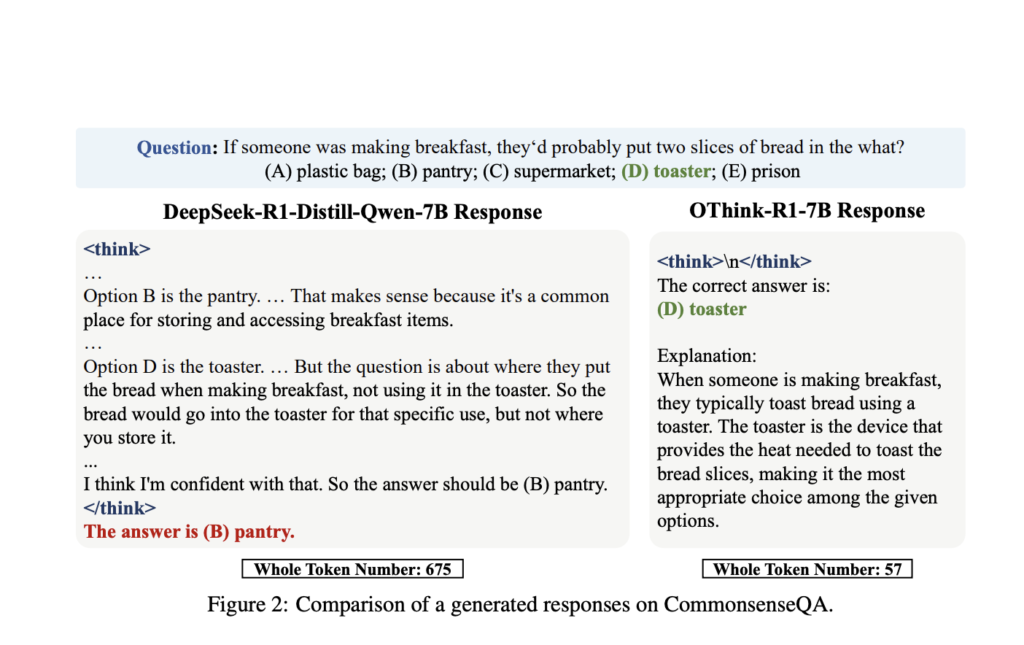

OThink-R1: A Dual-Mode Reasoning Framework to Cut Redundant Computation in LLMs

Source: MarkTechPost. The Inefficiency of Static Chain-of-Thought Reasoning in LRMs: Recent LRMs achieve top performance by using detailed...

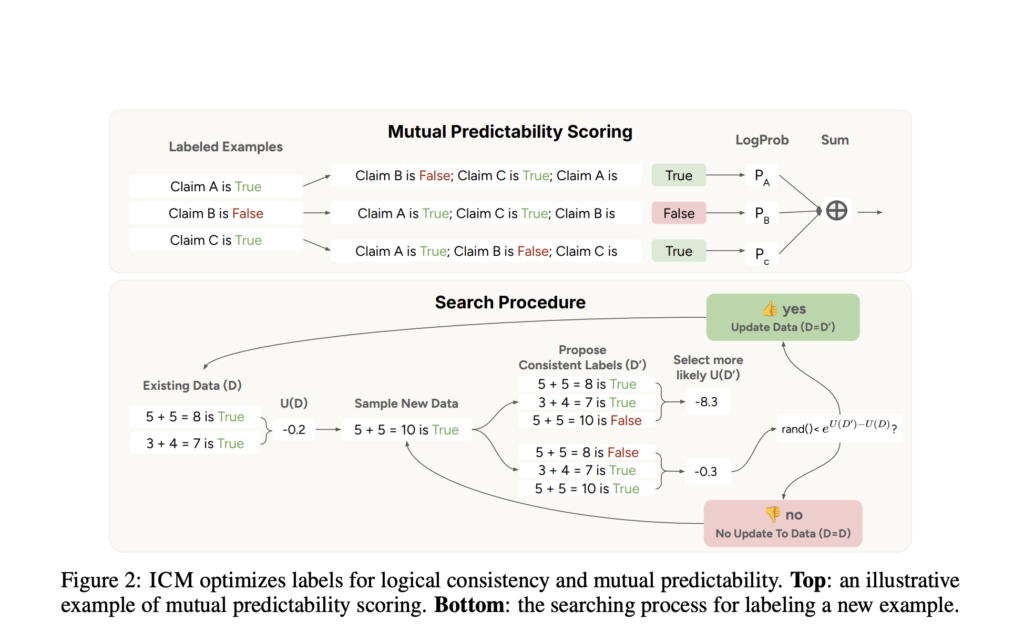

Internal Coherence Maximization (ICM): A Label-Free, Unsupervised Training Framework for LLMs

Source: MarkTechPost. Post-training methods for pre-trained language models (LMs) depend on human supervision through demonstrations or preference feedback...

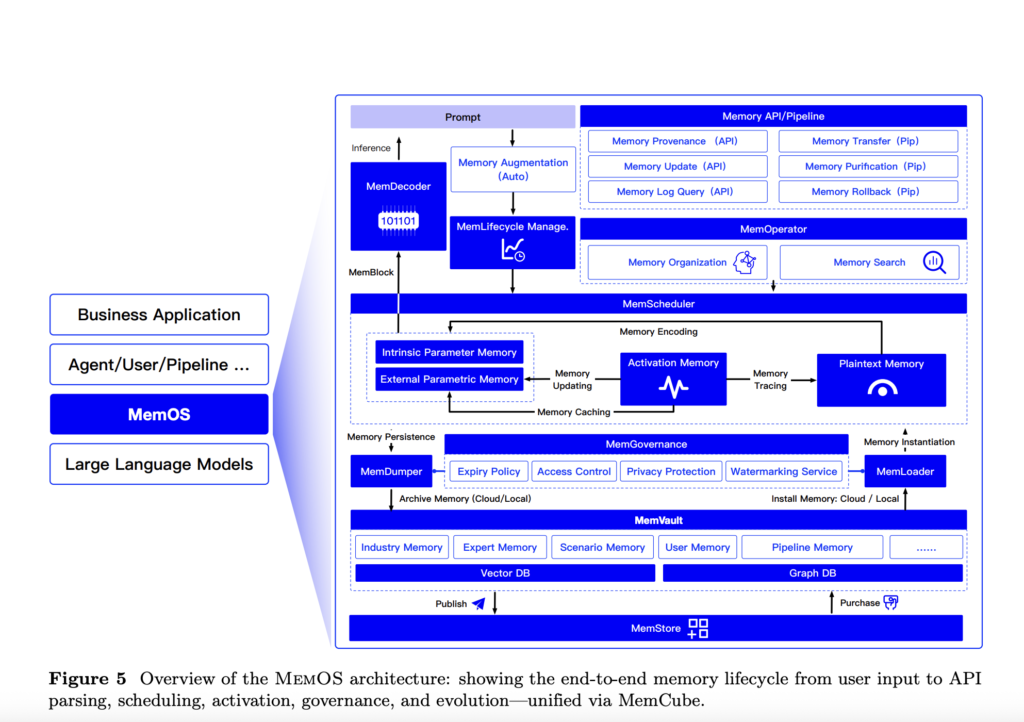

MemOS: A Memory-Centric Operating System for Evolving and Adaptive Large Language Models

Source: MarkTechPost. LLMs are increasingly seen as key to achieving Artificial General Intelligence (AGI), but they face major...