A Step by Step Guide to Build an Interactive Health Data Monitoring Tool Using Hugging Face Transformers and Open Source Model Bio_ClinicalBERT

Source: MarkTechPost. In this tutorial, we will learn how to build an interactive health data monitoring tool using...

Hugging Face Releases OlympicCoder: A Series of Open Reasoning AI Models that can Solve Olympiad-Level Programming Problems

Source: MarkTechPost. In the realm of competitive programming, both human participants and artificial intelligence systems encounter a set...

Limbic AI’s Generative AI–Enabled Therapy Support Tool Improves Cognitive Behavioral Therapy Outcomes

Source: MarkTechPost. Recent advancements in generative AI are creating exciting new possibilities in healthcare, especially within mental health...

From Genes to Genius: Evolving Large Language Models with Nature’s Blueprint

Source: MarkTechPost. Large language models (LLMs) have transformed artificial intelligence with their superior performance on various tasks, including...

Reka AI Open Sourced Reka Flash 3: A 21B General-Purpose Reasoning Model that was Trained from Scratch

Source: MarkTechPost. In today’s dynamic AI landscape, developers and organizations face several practical challenges. High computational demands, latency...

Implementing Text-to-Speech (TTS) with BARK Using Hugging Face’s Transformers Library in a Google Colab Environment

Source: MarkTechPost. Text-to-Speech (TTS) technology has evolved dramatically in recent years, from robotic-sounding voices to highly natural speech...

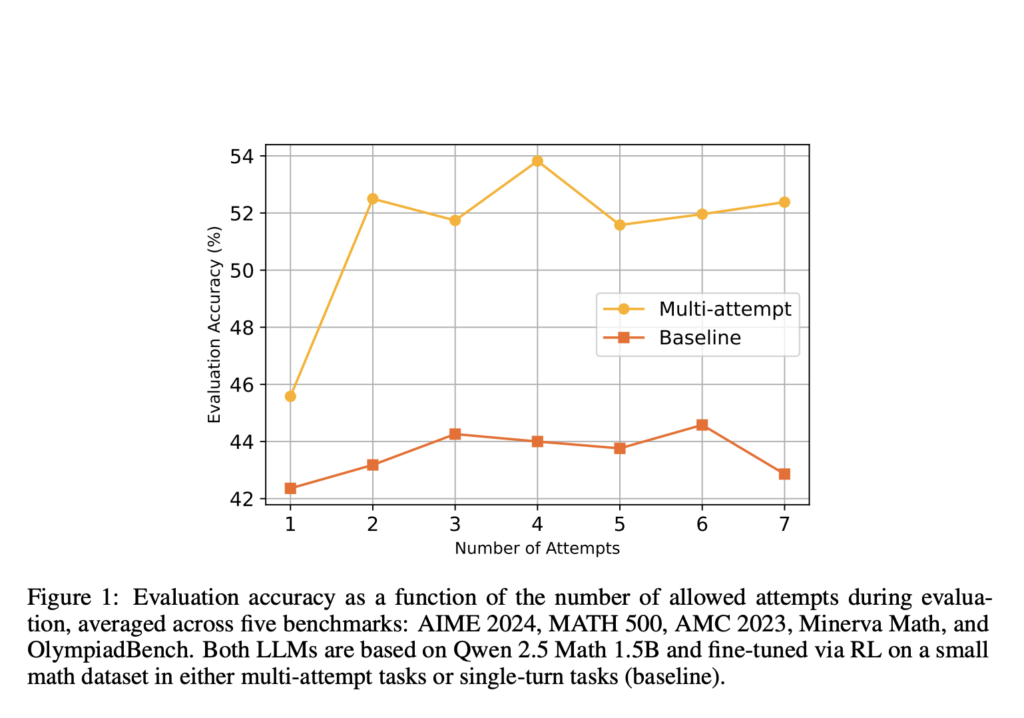

Enhancing LLM Reasoning with Multi-Attempt Reinforcement Learning

Source: MarkTechPost. Recent advancements in RL for LLMs, such as DeepSeek R1, have demonstrated that even simple question-answering...

This AI Paper Introduces RL-Enhanced QWEN 2.5-32B: A Reinforcement Learning Framework for Structured LLM Reasoning and Tool Manipulation

Source: MarkTechPost. Large reasoning models (LRMs) employ a deliberate, step-by-step thought process before arriving at a solution, making...

STORM (Spatiotemporal TOken Reduction for Multimodal LLMs): A Novel AI Architecture Incorporating a Dedicated Temporal Encoder between the Image Encoder and the LLM

Source: MarkTechPost. Understanding videos with AI requires handling sequences of images efficiently. A major challenge in current video-based...

What if You Could Control How Long a Reasoning Model “Thinks”? CMU Researchers Introduce L1-1.5B: Reinforcement Learning Optimizes AI Thought Process

Source: MarkTechPost. Reasoning language models have demonstrated the ability to enhance performance by generating longer chain-of-thought sequences during...