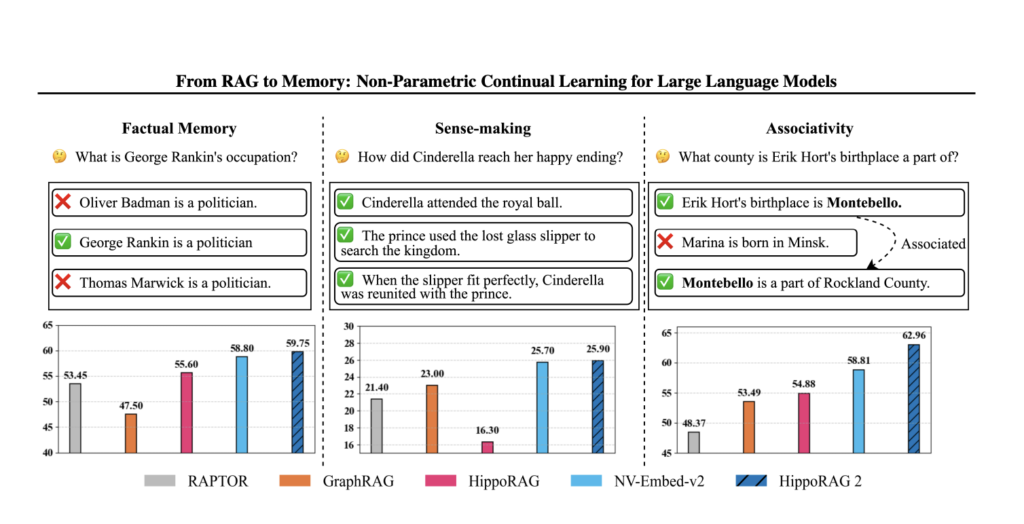

HippoRAG 2: Advancing Long-Term Memory and Contextual Retrieval in Large Language Models

Source: MarkTechPost

LLMs face challenges in continual learning due to the limitations of parametric knowledge retention, leading to...

DeepSeek AI Releases Smallpond: A Lightweight Data Processing Framework Built on DuckDB and 3FS

Source: MarkTechPost

Modern data workflows are increasingly burdened by growing dataset sizes and the complexity of distributed processing....

MedHELM: A Comprehensive Healthcare Benchmark to Evaluate Language Models on Real-World Clinical Tasks Using Real Electronic Health Records

Source: MarkTechPost

Large Language Models (LLMs) are widely used in medicine, facilitating diagnostic decision-making, patient sorting, clinical reporting,...

Researchers from UCLA, UC Merced and Adobe propose METAL: A Multi-Agent Framework that Divides the Task of Chart Generation into the Iterative Collaboration among Specialized Agents

Source: MarkTechPost

Creating charts that accurately reflect complex data remains a nuanced challenge in today’s data visualization landscape....

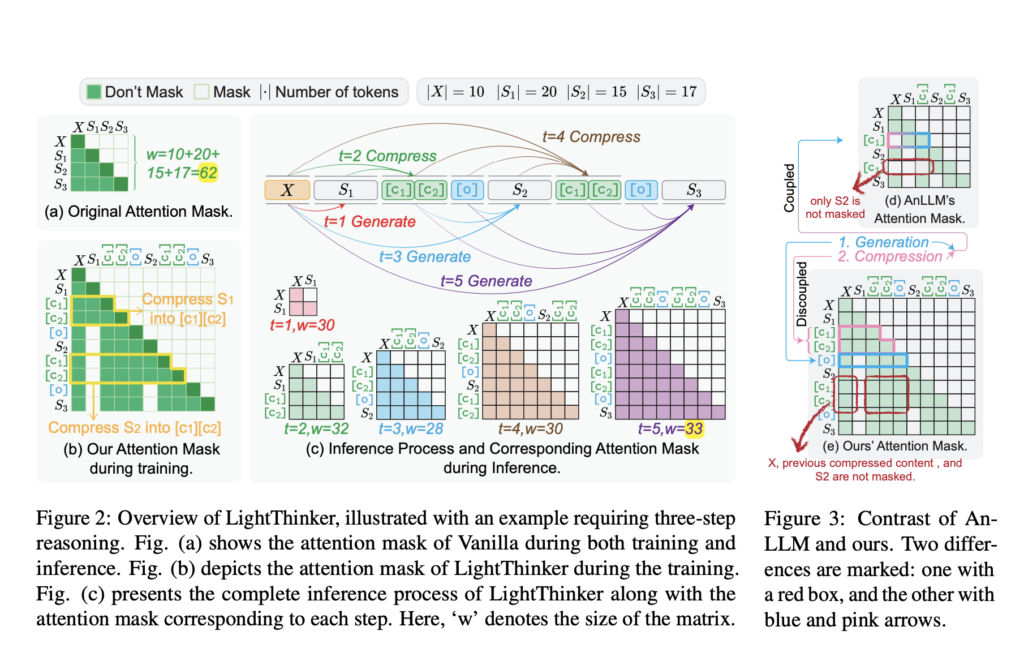

LightThinker: Dynamic Compression of Intermediate Thoughts for More Efficient LLM Reasoning

Source: MarkTechPost

Methods like Chain-of-Thought (CoT) prompting have enhanced reasoning by breaking complex problems into sequential sub-steps. More...

Self-Rewarding Reasoning in LLMs: Enhancing Autonomous Error Detection and Correction for Mathematical Reasoning

Source: MarkTechPost

LLMs have demonstrated strong reasoning capabilities in domains such as mathematics and coding, with models like...

DeepSeek’s Latest Inference Release: A Transparent Open-Source Mirage?

Source: MarkTechPost

DeepSeek’s recent update on its DeepSeek-V3/R1 inference system is generating buzz, yet for those who value...

Stanford Researchers Uncover Prompt Caching Risks in AI APIs: Revealing Security Flaws and Data Vulnerabilities

Source: MarkTechPost

The processing requirements of LLMs pose considerable challenges, particularly for real-time uses where fast response time...

A-MEM: A Novel Agentic Memory System for LLM Agents that Enables Dynamic Memory Structuring without Relying on Static, Predetermined Memory Operations

Source: MarkTechPost

Current memory systems for large language model (LLM) agents often struggle with rigidity and a lack...

Microsoft AI Released LongRoPE2: A Near-Lossless Method to Extend Large Language Model Context Windows to 128K Tokens While Retaining Over 97% Short-Context Accuracy

Source: MarkTechPost

Large Language Models (LLMs) have advanced significantly, but a key limitation remains their inability to process...