This AI Paper from Menlo Research Introduces AlphaMaze: A Two-Stage Training Framework for Enhancing Spatial Reasoning in Large Language Models

Source: MarkTechPost Artificial intelligence continues to advance in natural language processing but still faces challenges in spatial reasoning...

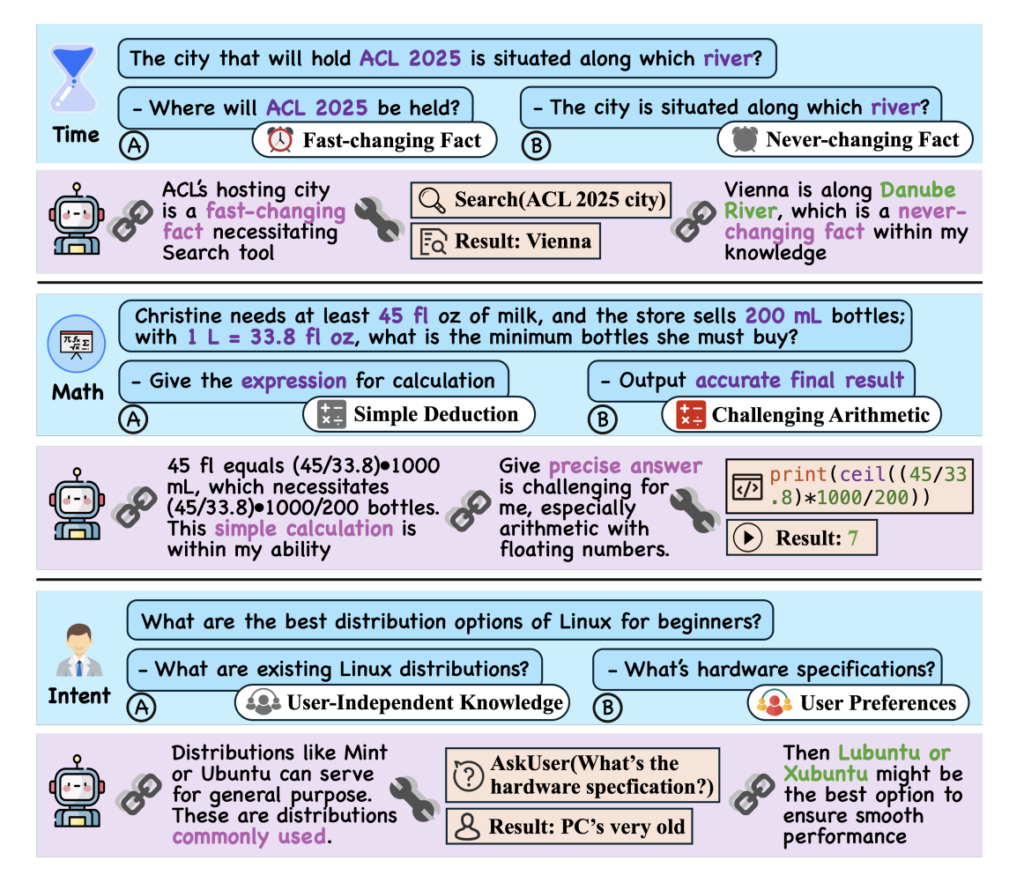

Optimizing LLM Reasoning: Balancing Internal Knowledge and Tool Use with SMART

Source: MarkTechPost Recent advancements in LLMs have significantly improved their reasoning abilities, enabling them to perform text composition,...

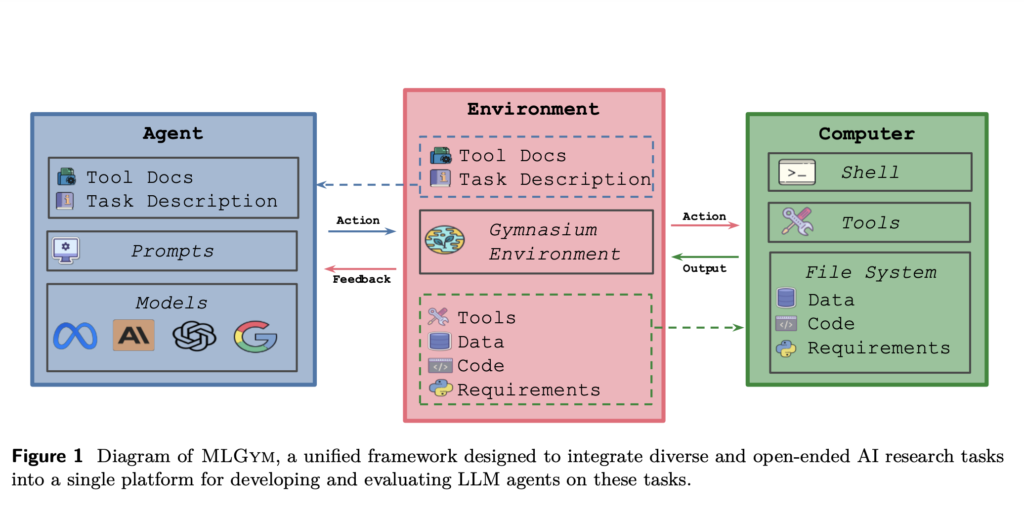

Meta AI Introduces MLGym: A New AI Framework and Benchmark for Advancing AI Research Agents

Source: MarkTechPost The ambition to accelerate scientific discovery through AI has been longstanding, with early efforts such as...

Microsoft Researchers Introduce BioEmu-1: A Deep Learning Model that can Generate Thousands of Protein Structures Per Hour on a Single GPU

Source: MarkTechPost Proteins are the essential component behind nearly all biological processes, from catalyzing reactions to transmitting signals...

Building a Legal AI Chatbot: A Step-by-Step Guide Using bigscience/T0pp LLM, Open-Source NLP Models, Streamlit, PyTorch, and Hugging Face Transformers

Source: MarkTechPost In this tutorial, we will build an efficient Legal AI Chatbot using open-source tools. It provides...

Optimizing Training Data Allocation Between Supervised and Preference Finetuning in Large Language Models

Source: MarkTechPost Large Language Models (LLMs) face significant challenges in optimizing their post-training methods, particularly in balancing Supervised...

This AI Paper from Weco AI Introduces AIDE: A Tree-Search-Based AI Agent for Automating Machine Learning Engineering

Source: MarkTechPost The development of high-performing machine learning models remains a time-consuming and resource-intensive process. Engineers and researchers...

What are AI Agents? Demystifying Autonomous Software with a Human Touch

Source: MarkTechPost In today’s digital landscape, technology continues to advance at a steady pace. One development that has...

Moonshot AI and UCLA Researchers Release Moonlight: A 3B/16B-Parameter Mixture-of-Experts (MoE) Model Trained with 5.7T Tokens Using Muon Optimizer

Source: MarkTechPost Training large language models (LLMs) has become central to advancing artificial intelligence, yet it is not...

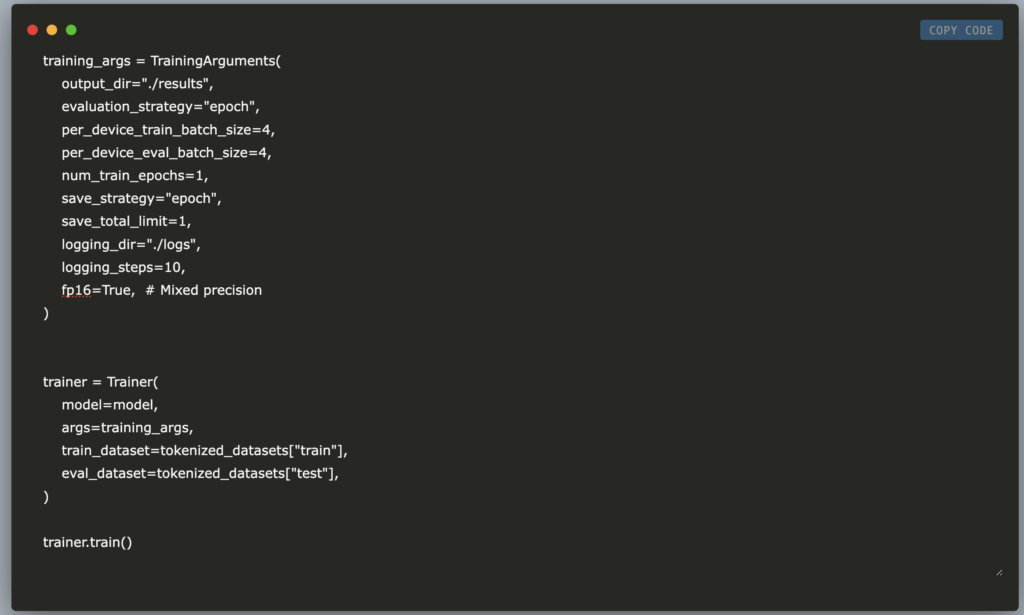

Fine-Tuning NVIDIA NV-Embed-v1 on Amazon Polarity Dataset Using LoRA and PEFT: A Memory-Efficient Approach with Transformers and Hugging Face

Source: MarkTechPost In this tutorial, we explore how to fine-tune NVIDIA’s NV-Embed-v1 model on the Amazon Polarity dataset...