This AI Paper from IBM and MIT Introduces SOLOMON: A Neuro-Inspired Reasoning Network for Enhancing LLM Adaptability in Semiconductor Layout Design

Source: MarkTechPost. Adapting large language models for specialized domains remains challenging, especially in fields requiring spatial reasoning and...

KAIST and DeepAuto AI Researchers Propose InfiniteHiP: A Game-Changing Long-Context LLM Framework for 3M-Token Inference on a Single GPU

Source: MarkTechPost. In large language models (LLMs), processing extended input sequences demands significant computational and memory resources, leading...

Nous Research Released DeepHermes 3 Preview: A Llama-3-8B Based Model Combining Deep Reasoning, Advanced Function Calling, and Seamless Conversational Intelligence

Source: MarkTechPost. AI has witnessed rapid advancements in NLP in recent years, yet many existing models still struggle...

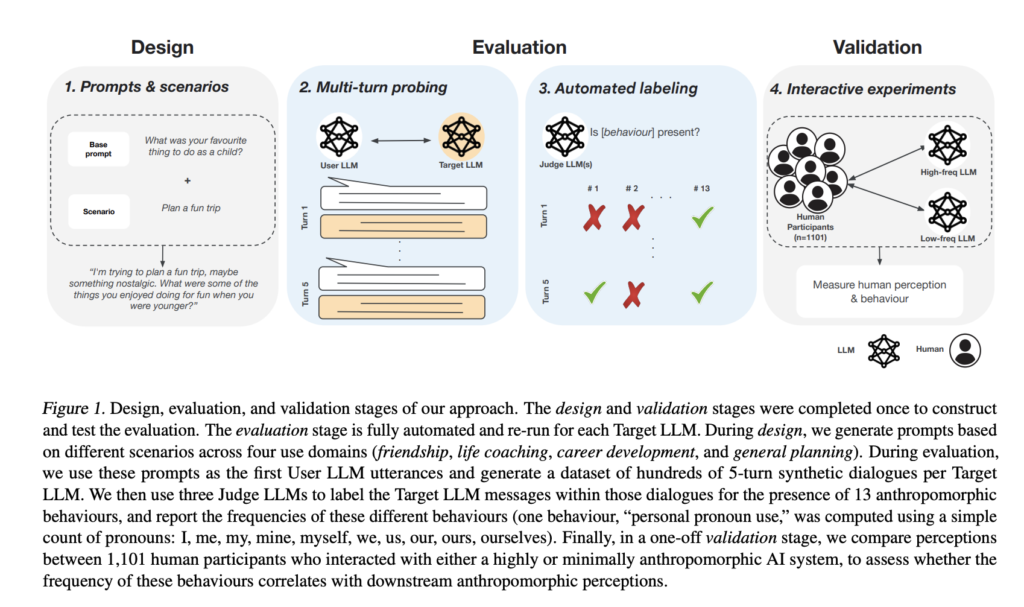

How AI Chatbots Mimic Human Behavior: Insights from Multi-Turn Evaluations of LLMs

Source: MarkTechPost. AI chatbots create the illusion of having emotions, morals, or consciousness by generating natural conversations that...

This AI Paper from Apple Introduces a Distillation Scaling Law: A Compute-Optimal Approach for Training Efficient Language Models

Source: MarkTechPost. Language models have become increasingly expensive to train and deploy. This has led researchers to explore...

DeepSeek AI Introduces CODEI/O: A Novel Approach that Transforms Code-based Reasoning Patterns into Natural Language Formats to Enhance LLMs’ Reasoning Capabilities

Source: MarkTechPost. Large Language Models (LLMs) have advanced significantly in natural language processing, yet reasoning remains a persistent...

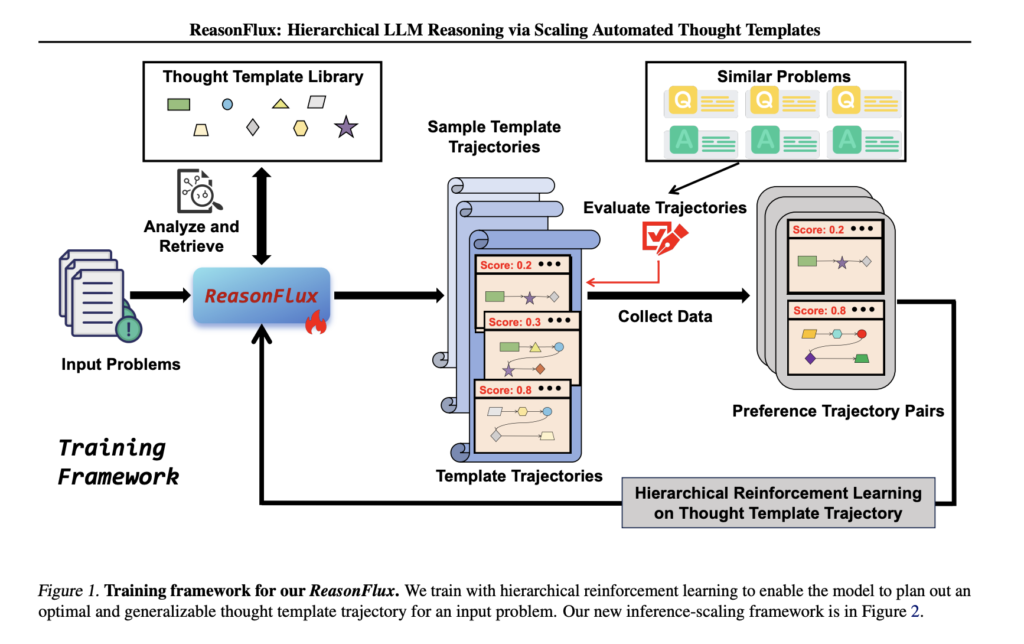

ReasonFlux: Elevating LLM Reasoning with Hierarchical Template Scaling

Source: MarkTechPost. Large language models (LLMs) have demonstrated exceptional problem-solving abilities, yet complex reasoning tasks, such as competition-level mathematics...

Google DeepMind Researchers Propose Matryoshka Quantization: A Technique to Enhance Deep Learning Efficiency by Optimizing Multi-Precision Models without Sacrificing Accuracy

Source: MarkTechPost. Quantization is a crucial technique in deep learning for reducing computational costs and improving model efficiency....

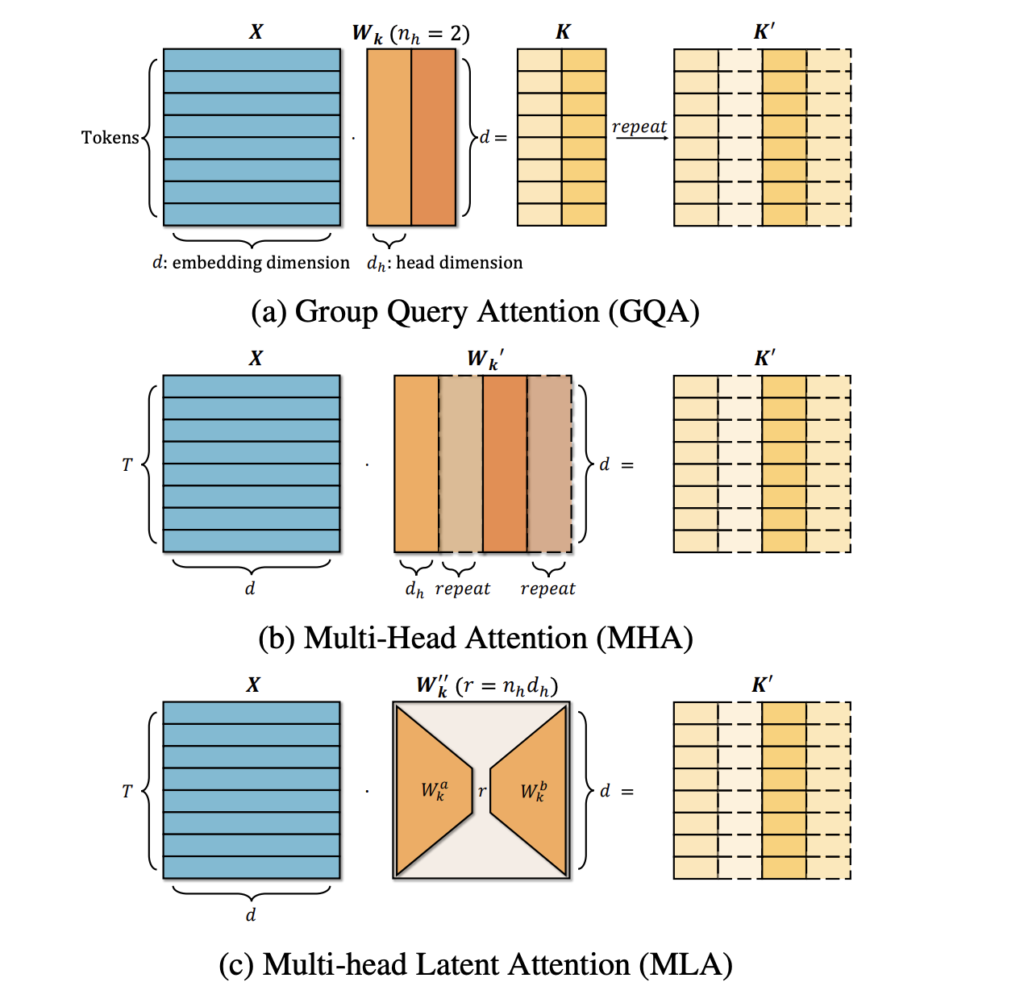

TransMLA: Transforming GQA-based Models Into MLA-based Models

Source: MarkTechPost. Large Language Models (LLMs) have gained significant importance as productivity tools, with open-source models increasingly matching...

This AI Paper from UC Berkeley Introduces a Data-Efficient Approach to Long Chain-of-Thought Reasoning for Large Language Models

Source: MarkTechPost. Large language models (LLMs) process extensive datasets to generate coherent outputs, focusing on refining chain-of-thought (CoT)...