Microsoft Research Releases OptiMind: A 20B Parameter Model that Turns Natural Language into Solver Ready Optimization Models

Source: MarkTechPost Microsoft Research has released OptiMind, an AI-based system that converts natural language descriptions of complex...

Nous Research Releases NousCoder-14B: A Competitive Olympiad Programming Model Post-Trained on Qwen3-14B via Reinforcement Learning

Source: MarkTechPost Nous Research has introduced NousCoder-14B, a competitive olympiad programming model that is post-trained on Qwen3-14B...

A Coding Guide to Understanding How Retries Trigger Failure Cascades in RPC and Event-Driven Architectures

Source: MarkTechPost In this tutorial, we build a hands-on comparison between a synchronous RPC-based system and an asynchronous...

Vercel Releases Agent Skills: A Package Manager For AI Coding Agents With 10 Years of React and Next.js Optimisation Rules

Source: MarkTechPost Vercel has released agent-skills, a collection of skills that turns best-practice playbooks into reusable skills...

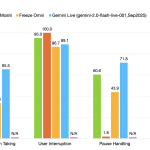

NVIDIA Releases PersonaPlex-7B-v1: A Real-Time Speech-to-Speech Model Designed for Natural and Full-Duplex Conversations

Source: MarkTechPost NVIDIA researchers released PersonaPlex-7B-v1, a full-duplex speech-to-speech conversational model that targets natural voice...

![Black Forest Labs Releases FLUX.2 [klein]: Compact Flow Models for Interactive Visual Intelligence](https://aifuturefront.com/wp-content/uploads/2026/01/31588-black-forest-labs-releases-flux-2-klein-compact-flow-models-for-interactive-visual-intelligence.png)

Black Forest Labs Releases FLUX.2 [klein]: Compact Flow Models for Interactive Visual Intelligence

Source: MarkTechPost Black Forest Labs releases FLUX.2 [klein], a compact image model family that targets interactive visual intelligence...

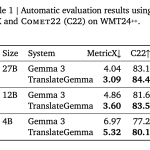

Google AI Releases TranslateGemma: A New Family of Open Translation Models Built on Gemma 3 with Support for 55 Languages

Source: MarkTechPost Google AI has released TranslateGemma, a suite of open machine translation models built on Gemma 3...

NVIDIA AI Open-Sourced KVzap: A SOTA KV Cache Pruning Method that Delivers near-Lossless 2x-4x Compression

Source: MarkTechPost As context lengths move into tens and hundreds of thousands of tokens, the key-value cache...

DeepSeek AI Researchers Introduce Engram: A Conditional Memory Axis For Sparse LLMs

Source: MarkTechPost Transformers use attention and Mixture-of-Experts to scale computation, but they still lack a native way to...

At MIT, a continued commitment to understanding intelligence

Source: MIT News – Artificial intelligence The MIT Siegel Family Quest for Intelligence (SQI), a research unit in...