Salesforce AI Research Introduces Reward-Guided Speculative Decoding (RSD): A Novel Framework that Improves the Efficiency of Inference in Large Language Models (LLMs) Up To 4.4× Fewer FLOPs

Source: MarkTechPost. In recent years, the rapid scaling of large language models (LLMs) has led to extraordinary improvements...

Layer Parallelism: Enhancing LLM Inference Efficiency Through Parallel Execution of Transformer Layers

Source: MarkTechPost. LLMs have demonstrated exceptional capabilities, but their substantial computational demands pose significant challenges for large-scale deployment....

ByteDance Introduces UltraMem: A Novel AI Architecture for High-Performance, Resource-Efficient Language Models

Source: MarkTechPost. Large Language Models (LLMs) have revolutionized natural language processing (NLP) but face significant challenges in practical...

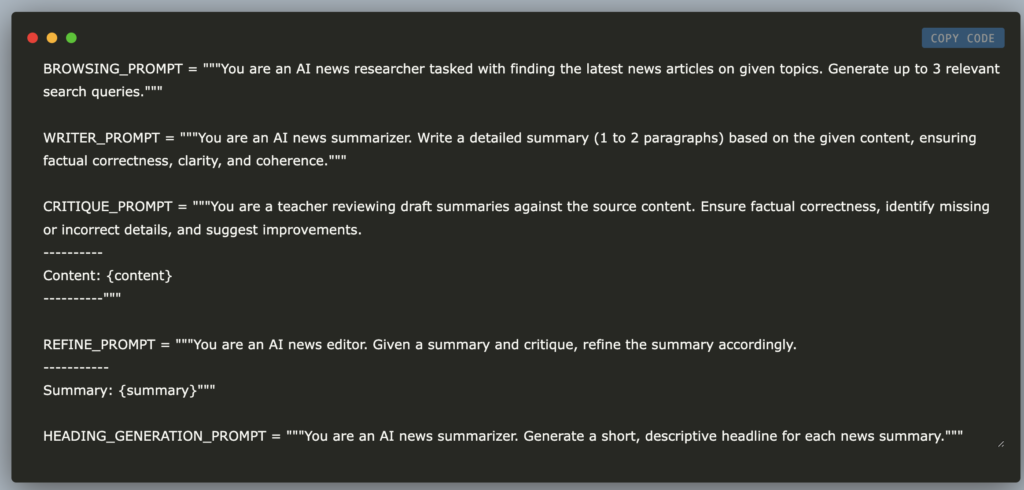

Step by Step Guide on How to Build an AI News Summarizer Using Streamlit, Groq and Tavily

Source: MarkTechPost. In this tutorial, we will build an advanced AI-powered news agent that can search the...

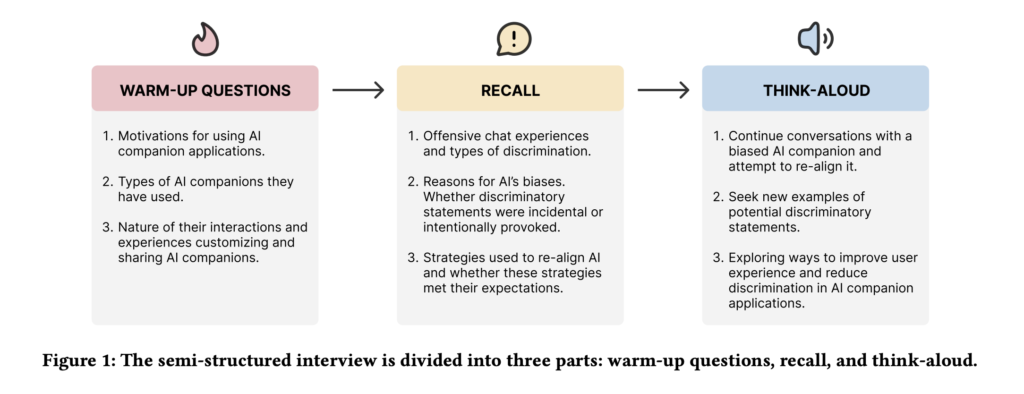

Can Users Fix AI Bias? Exploring User-Driven Value Alignment in AI Companions

Source: MarkTechPost. Large language model (LLM)–based AI companions have evolved from simple chatbots into entities that users perceive...

Google DeepMind Research Introduces WebLI-100B: Scaling Vision-Language Pretraining to 100 Billion Examples for Cultural Diversity and Multilinguality

Source: MarkTechPost. Machines learn to connect images and text by training on large datasets, where more data helps...

Meta AI Introduces CoCoMix: A Pretraining Framework Integrating Token Prediction with Continuous Concepts

Source: MarkTechPost. The dominant approach to pretraining large language models (LLMs) relies on next-token prediction, which has proven...

Anthropic AI Launches the Anthropic Economic Index: A Data-Driven Look at AI’s Economic Role

Source: MarkTechPost. Artificial Intelligence is increasingly integrated into various sectors, yet there is limited empirical evidence on its...

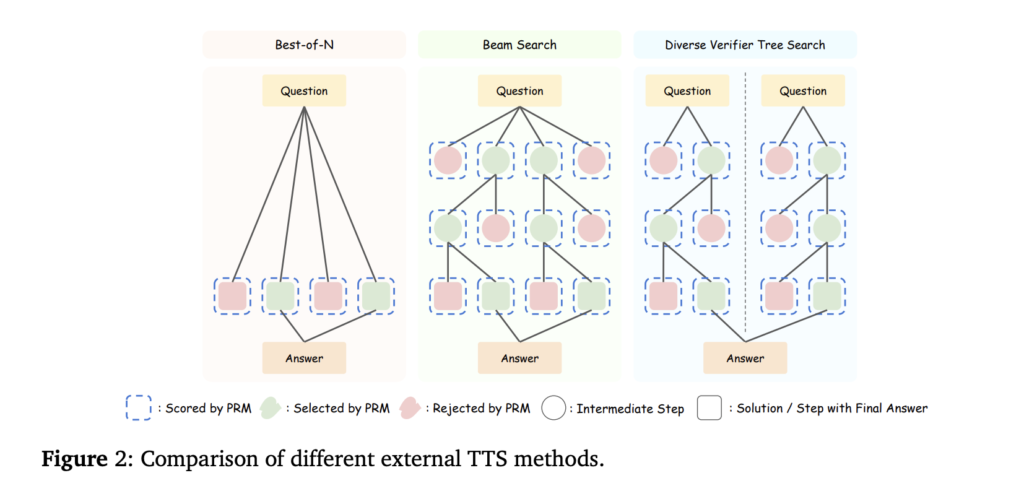

Can 1B LLM Surpass 405B LLM? Optimizing Computation for Small LLMs to Outperform Larger Models

Source: MarkTechPost. Test-Time Scaling (TTS) is a crucial technique for enhancing the performance of LLMs by leveraging additional...

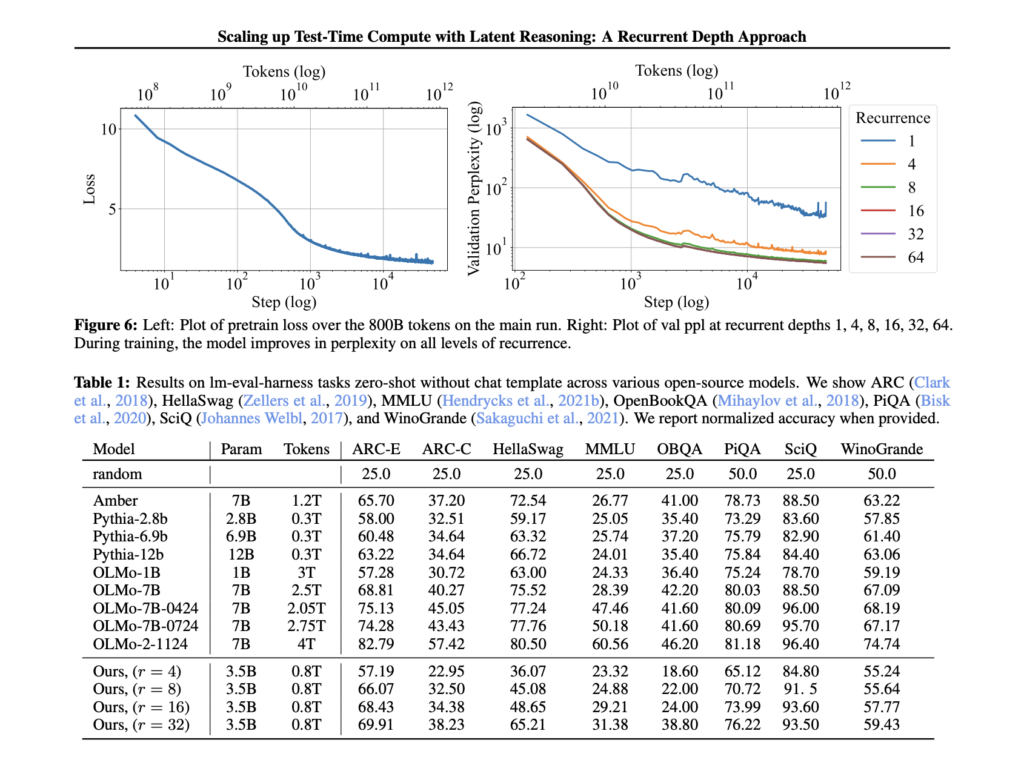

Meet Huginn-3.5B: A New AI Reasoning Model with Scalable Latent Computation

Source: MarkTechPost. Artificial intelligence models face a fundamental challenge in efficiently scaling their reasoning capabilities at test time....