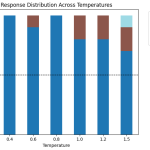

5 Common LLM Parameters Explained with Examples

Source: MarkTechPost Large language models (LLMs) offer several parameters that let you fine-tune their behavior and control how...

How to Build, Train, and Compare Multiple Reinforcement Learning Agents in a Custom Trading Environment Using Stable-Baselines3

Source: MarkTechPost In this tutorial, we explore advanced applications of Stable-Baselines3 in reinforcement learning. We design a fully...

A New AI Research from Anthropic and Thinking Machines Lab Stress Tests Model Specs and Reveals Character Differences among Language Models

Source: MarkTechPost AI companies use model specifications to define target behaviors during training and evaluation. Do current specs...

Liquid AI’s LFM2-VL-3B Brings a 3B Parameter Vision Language Model (VLM) to Edge-Class Devices

Source: MarkTechPost Liquid AI released LFM2-VL-3B, a 3B parameter vision language model for image-text-to-text tasks....

An Implementation on Building Advanced Multi-Endpoint Machine Learning APIs with LitServe: Batching, Streaming, Caching, and Local Inference

Source: MarkTechPost In this tutorial, we explore LitServe, a lightweight and powerful serving framework that allows us to...

Salesforce AI Research Introduces WALT (Web Agents that Learn Tools): Enabling LLM Agents to Automatically Discover Reusable Tools from Any Website

Source: MarkTechPost A team of Salesforce AI researchers introduced WALT (Web Agents that Learn Tools), a framework that...

Google AI Introduces FLAME Approach: A One-Step Active Learning that Selects the Most Informative Samples for Training and Makes a Model Specialization Super Fast

Source: MarkTechPost Open-vocabulary object detectors answer text queries with boxes. In remote sensing, zero-shot performance drops...

UltraCUA: A Foundation Computer-Use Agents Model that Bridges the Gap between General-Purpose GUI Agents and Specialized API-based Agents

Source: MarkTechPost Computer-use agents have been limited to primitives. They click, they type, they scroll. Long action chains...

Anthrogen Introduces Odyssey: A 102B Parameter Protein Language Model that Replaces Attention with Consensus and Trains with Discrete Diffusion

Source: MarkTechPost Anthrogen has introduced Odyssey, a family of protein language models for sequence and structure generation, protein...

PokeeResearch-7B: An Open 7B Deep-Research Agent Trained with Reinforcement Learning from AI Feedback (RLAIF) and a Robust Reasoning Scaffold

Source: MarkTechPost Pokee AI has open-sourced PokeeResearch-7B, a 7B parameter deep research agent that executes full research...